Enterprise AI that runs entirely on your hardware.

No cloud. No third parties. No compromises.

Built With Our Proprietary Software

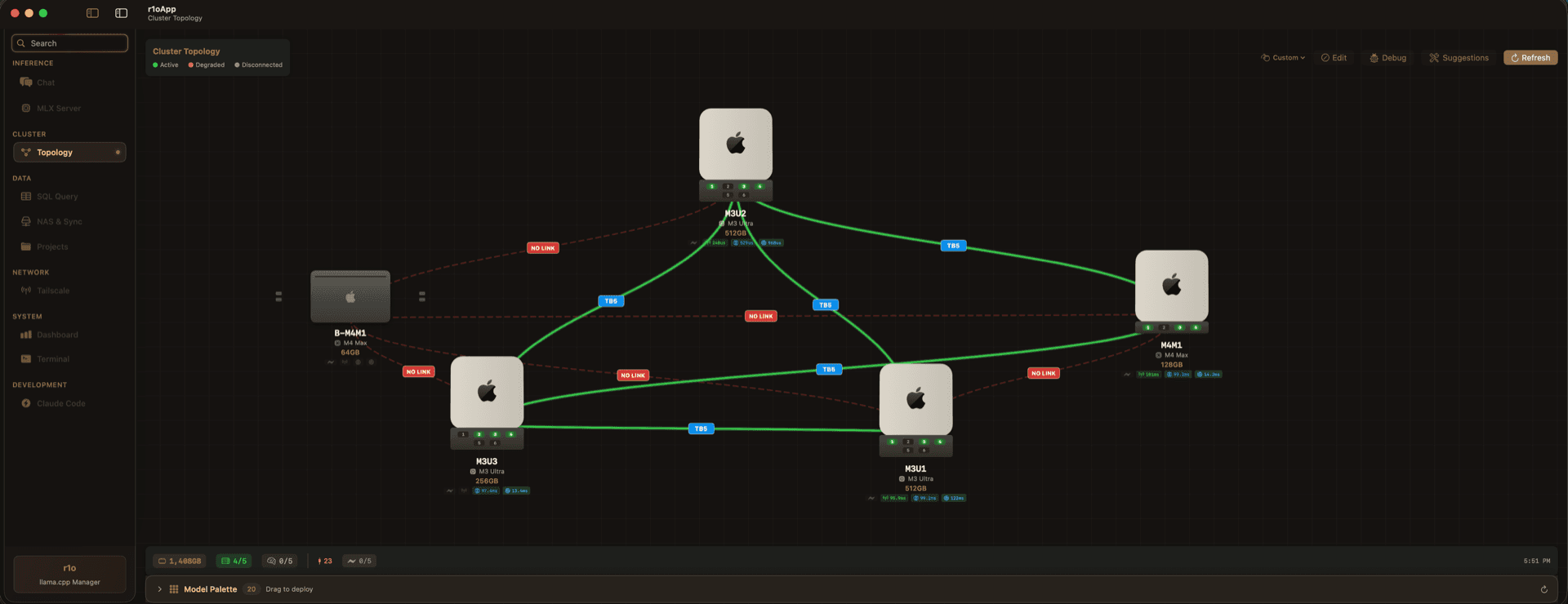

Your AI command center

Powered by our proprietary cluster orchestration platform. Monitor models, track inference, and manage your entire AI infrastructure from a single pane of glass.

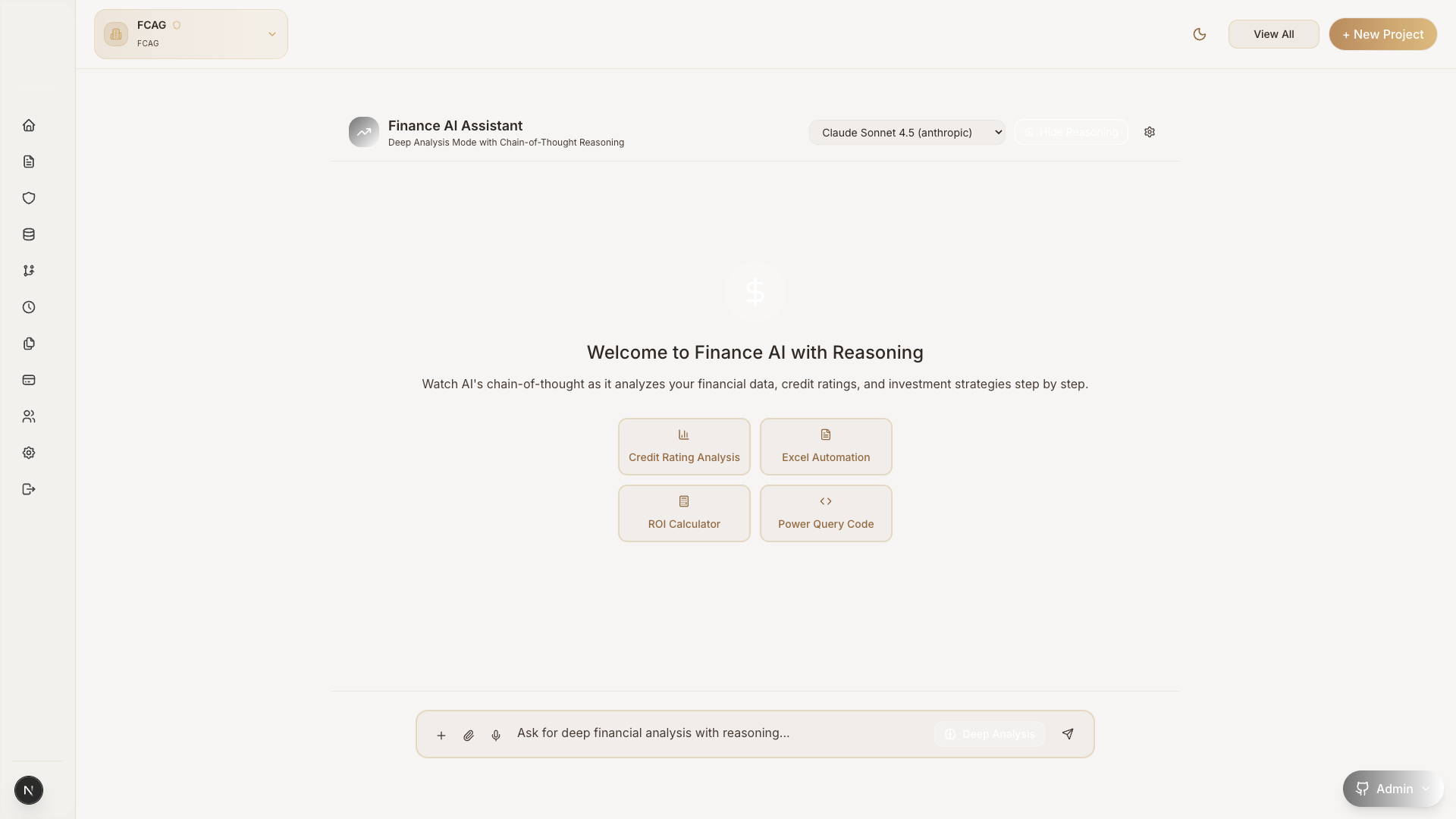

Conversational AI Interface

AI that knows your business

A private AI assistant trained on your data. Ask questions about costs, contracts, documents, and operations — get instant, accurate answers without exposing a single byte to the cloud.

Every frontier model. Plus your own.

Use cloud frontier models through your own API keys, or run on-cluster MLX inference on the Apple Silicon mesh we install on-site. No data leaves your perimeter unless you say so.

| Model | Provider | Reasoning | Vision | Tools | Context | Price ($/M) | Score |
|---|---|---|---|---|---|---|---|
| Qwen3.5 397B-A17B (6-bit), On-cluster | fib0 Cluster | | | | 262K | On-prem | 96 |
| Kimi K2.6 (unquantized), On-cluster | fib0 Cluster | | | | 262K | On-prem | 96 |
| Kimi K2.5 (4-bit), On-cluster | fib0 Cluster | | | | 262K | On-prem | 95 |
| DeepSeek V4 Flash (8-bit), On-cluster | fib0 Cluster | | | | 1.0M | On-prem | 95 |
| DeepSeek V4 Flash (4-bit), On-cluster | fib0 Cluster | | | | 1.0M | On-prem | 94 |
| Qwen3.5 122B-A10B (NVFP4), On-cluster | fib0 Cluster | | | | 262K | On-prem | 93 |
| Qwen3-Next 80B-A3B (4-bit), On-cluster | fib0 Cluster | | | | 262K | On-prem | 92 |
| Qwen3-Coder Next (8-bit), On-cluster | fib0 Cluster | | | | 262K | On-prem | 92 |
| GLM-4.7 Flash (8-bit), On-cluster | fib0 Cluster | | | | 203K | On-prem | 90 |
| Qwen3-VL 30B-A3B (bf16), On-cluster | fib0 Cluster | | | | 262K | On-prem | 90 |
| GLM-4.6V (4-bit), On-cluster | fib0 Cluster | | | | 131K | On-prem | 89 |
| Nemotron-H 8B Reasoning (4-bit), On-cluster | fib0 Cluster | | | | 33K | On-prem | 89 |

Score is fib0’s internal aggregate across reasoning, accuracy, speed, and creativity (0–100). Cloud pricing is the published per-million-token rate; on-cluster models bill against your hardware, not per-token. Catalog updated May 2026.

Your own custom, private AI model.

Every deployment is branded to your organization. Your logo, your domain, your data — an AI platform your team will recognize as their own.

Enterprise ready.

Complete Privacy

The average data breach costs $4.88M (IBM, 2024). Your prompts, documents, and model outputs never leave your network: zero cloud API exposure.

Cost Efficient

Cloud APIs charge $10–$75 per million tokens. Local inference costs ~$0.50 per million tokens in electricity. Hardware pays for itself in under 7 months.
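
The payback period depends on how many tokens you process and which cloud rate you are replacing. A minimal sketch of that arithmetic follows, using the per-million-token rates quoted above. The hardware cost and monthly token volume are hypothetical placeholders, not fib0 pricing, and the function name is illustrative only.

```python
def breakeven_months(hardware_cost_usd, million_tokens_per_month,
                     cloud_price_per_million, local_price_per_million=0.50):
    """Months until avoided cloud spend equals the up-front hardware cost."""
    # Savings per month = volume * (cloud rate - local electricity rate).
    monthly_savings = million_tokens_per_month * (
        cloud_price_per_million - local_price_per_million
    )
    if monthly_savings <= 0:
        raise ValueError("local inference is not cheaper at these rates")
    return hardware_cost_usd / monthly_savings


if __name__ == "__main__":
    # Hypothetical inputs: a $40,000 cluster processing 500M tokens per month.
    for cloud_price in (10.0, 75.0):
        months = breakeven_months(40_000, 500, cloud_price)
        print(f"cloud at ${cloud_price:.0f}/M tokens: "
              f"break-even in {months:.1f} months")
```

Plug in your own hardware quote and token volume; lower volumes or cheaper cloud rates lengthen the payback period.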

Apple Silicon Native

M3 Ultra runs 70B models at 95 tokens/sec on 270W, making it 3x more power-efficient than NVIDIA H200. Silent, desk-friendly, zero driver configuration.
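
To turn the throughput and power figures above into an energy cost, here is a rough sketch that converts tokens per second and watts into kilowatt-hours per million tokens. The electricity rate is an assumption not stated on this page, and the calculation covers only the single throughput figure quoted above, so treat the result as a back-of-envelope estimate.

```python
def kwh_per_million_tokens(tokens_per_sec, watts):
    """Energy in kWh needed to generate one million tokens at a steady rate."""
    seconds = 1_000_000 / tokens_per_sec
    joules = watts * seconds          # 1 W sustained for 1 s = 1 J
    return joules / 3_600_000         # 1 kWh = 3.6 million J


if __name__ == "__main__":
    kwh = kwh_per_million_tokens(tokens_per_sec=95, watts=270)
    price_per_kwh = 0.15  # assumed electricity rate in USD, not from the page
    print(f"{kwh:.2f} kWh per million tokens, "
          f"about ${kwh * price_per_kwh:.2f} at ${price_per_kwh:.2f}/kWh")
```

Slower throughput raises the energy per token proportionally, so rerun the numbers with the measured tokens/sec for the model you actually deploy.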

The future of AI is local.

Join forward-thinking companies using local AI to protect their data, control costs, and maintain competitive advantage.